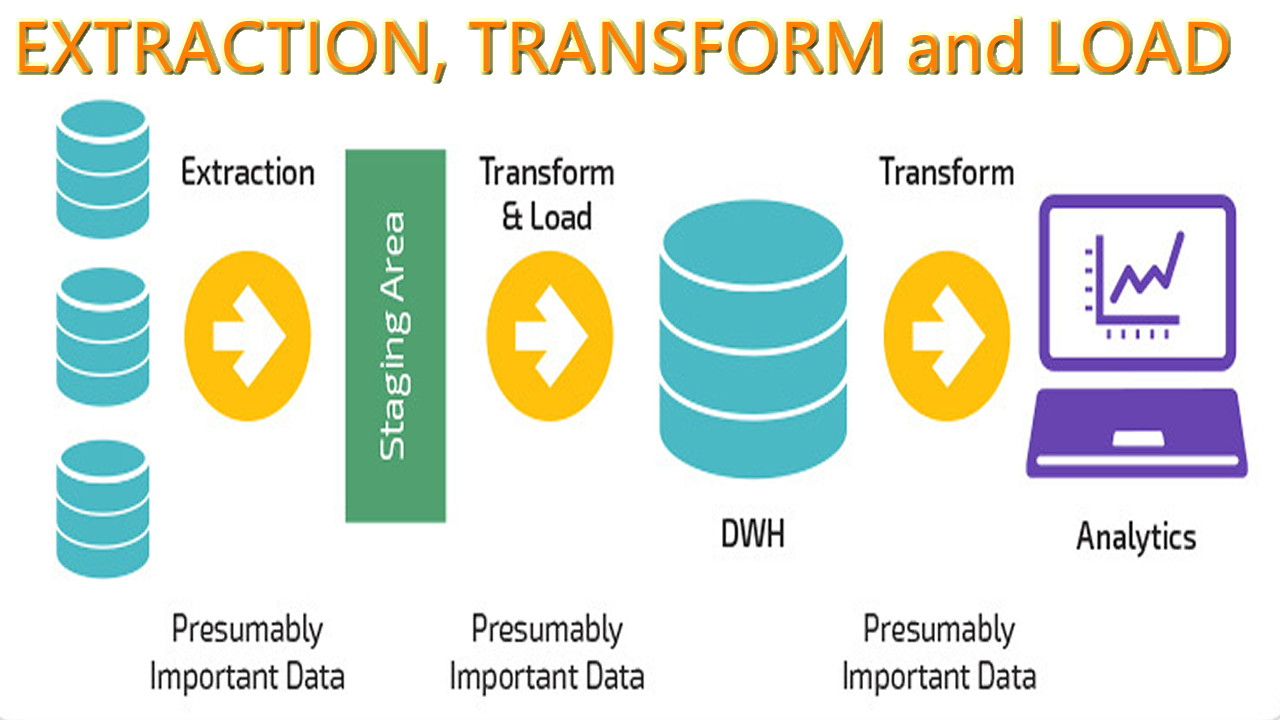

ETL stands for Extract, Transform, and Load. ETL describes the basic data integration process used to collect and then synthesize data from multiple sources that do not typically share data types. Synthesized data is then placed in a repository, like a Data Warehouse, Data Mart, or Data Hub, for use by end data users.

An ETL process, or a similar process, is essential for ensuring data consistency and integrity before data is loaded into a storage system. Normally, data pulled from sources like data lakes is unstructured, and is then combined with structured data from other sources to form insights across disparate data domains. (Unstructured data is data like social media content, images and photos, and audio formats that does not readily conform to a data model. Structured data has been standardized, such as product information that clearly separates product name, description, dimensions, etc., and can be placed alongside similar entries in a structured database, like an RDBMS.)

It is the need to combine structured and unstructured data that has led to applications and suites of tools that help carry out ETL processes more efficiently and effectively. While ETL can be performed with separate extraction, transformation, and loading tools, ETL suites save time and offer features that reduce the complexity of ETL. They assist in several ways:

- Provide deep historical business context.
- Enable Business Intelligence, improving decision making.
- Combine data domains in ways previously unimagined.
- Enhance a common central data repository.
- Improve productivity, enhance features, and reduce the learning curve.

ETL works in three main stages: Extract data, Transform data, and Load data into the data warehouse.

Extract - During this stage, raw data is copied or pulled from external data sources into a staging server, where further transformations are made on it. Data can come from structured and unstructured sources, which may include:

- Flat files like spreadsheets or CSV files.

Transform - Within the staging server, raw data is processed to conform to predefined data types, which are based on the analytical needs of the business. Typical data processing in the transformation stage includes:

- Filtering, cleaning, deduplication, data validation, and data authentication.
- Data calculations, translations, and summations.
- Encrypting, protecting, and censoring data in accordance with regulations.
- Formatting data into appropriate data warehouse schemas.

Load - In this step, the transformed data is migrated to the data warehouse. This process has been automated, and can be set up to send initial data to the warehouse, then periodically upload new changes, and if necessary send a full data refresh.

Four general categories of ETL tools exist today.
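The Extract, Transform, and Load stages described here can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how any particular ETL suite works: the sample CSV, the `orders` table, the column names, and the `extract`, `transform`, and `load` helpers are all hypothetical. An in-memory SQLite database stands in for the data warehouse, and a plain SHA-256 hash stands in for whatever censoring a real regulation would require.

```python
import csv
import hashlib
import io
import sqlite3

# Hypothetical flat-file source: one duplicate row and one incomplete row.
RAW_CSV = """order_id,email,quantity,unit_price
1,ann@example.com,2,9.50
2,bob@example.com,1,20.00
2,bob@example.com,1,20.00
3,cat@example.com,,5.00
"""


def extract(source_text):
    """Extract: copy raw rows from the source into a staging list."""
    return list(csv.DictReader(io.StringIO(source_text)))


def transform(staged_rows):
    """Transform: validate, deduplicate, calculate, censor, and type the rows."""
    seen = set()
    clean = []
    for row in staged_rows:
        if not row["quantity"]:          # validation: drop incomplete rows
            continue
        order_id = int(row["order_id"])
        if order_id in seen:             # deduplication on the order id
            continue
        seen.add(order_id)
        quantity = int(row["quantity"])
        total = round(quantity * float(row["unit_price"]), 2)  # calculation
        # Censoring (illustrative only): keep a hash, not the raw email.
        email_hash = hashlib.sha256(row["email"].encode("utf-8")).hexdigest()
        clean.append((order_id, email_hash, quantity, total))
    return clean


def load(conn, rows, full_refresh=False):
    """Load: write transformed rows into the stand-in warehouse table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders ("
        "order_id INTEGER PRIMARY KEY, email_hash TEXT, "
        "quantity INTEGER, total REAL)"
    )
    if full_refresh:                     # full data refresh: empty the table first
        conn.execute("DELETE FROM orders")
    # INSERT OR REPLACE lets periodic loads apply new changes by key.
    conn.executemany("INSERT OR REPLACE INTO orders VALUES (?, ?, ?, ?)", rows)
    conn.commit()


warehouse = sqlite3.connect(":memory:")
load(warehouse, transform(extract(RAW_CSV)))
print(warehouse.execute(
    "SELECT order_id, quantity, total FROM orders ORDER BY order_id"
).fetchall())
# prints [(1, 2, 19.0), (2, 1, 20.0)]
```

Calling `load` again with new rows mimics a periodic upload of changes, while passing `full_refresh=True` mimics a full data refresh. A real pipeline would typically track which source rows have changed instead of reprocessing the whole file.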

